To run the tests locally you need to be authenticated and have a project created on that account. Write to BigQuery with explicit (non-default) credentials # service account credentials creds_dict = res = to_gbq ( ddf, project_id = "my_project_id", dataset_id = "my_dataset_id", table_id = "my_table_name", credentials = credentials, ) Run tests locally After the job is done, the intermediary data is deleted. You can provide a diferent bucket name by setting the parameter: bucket="my-gs-bucket". to_gbq ( ddf, project_id = "my_project_id", dataset_id = "my_dataset_id", table_id = "my_table_name", )īefore loading data into BigQuery, to_gbq writes intermediary Parquet to a Google Storage bucket. timeseries ( freq = "1min" ) res = dask_bigquery. head () Example: write to BigQuery Write to BigQuery with default credentialsĪssuming that client and workers are already provisioned with default credentials: import dask import dask_bigquery ddf = dask. read_gbq ( project_id = "your_project_id", dataset_id = "your_dataset", table_id = "your_table", ) ddf. import dask_bigquery ddf = dask_bigquery. Example: read from BigQueryĭask-bigquery assumes that you are already authenticated. You can set the Application Default Credentials to the service account key using the GOOGLE_APPLICATION_CREDENTIALS environment variable: $ export GOOGLE_APPLICATION_CREDENTIALS =/home//google.jsonįor information on obtaining the credentials, use Google API documentation.

For settings where this isn't possible, you'll need to create a service account. User credentials require interactive login. When running code locally, you can set this to use your user credentials by running $ gcloud auth application-default login The minimal permissions to cover reading and writing:īy default, dask-bigquery will use the Application Default Credentials. BigQuery Data Viewer, BigQuery Data Editor, or BigQuery Data OwnerĪlternately, BigQuery Admin would give you full access to sessions and data.įor writing to BigQuery, the following roles are sufficient:.

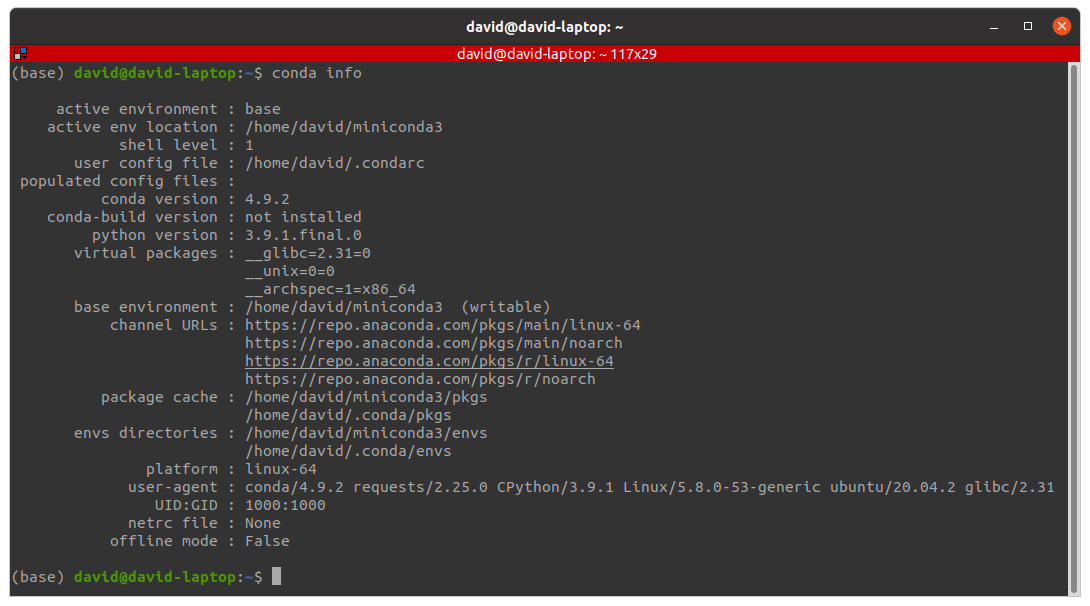

Or with conda: conda install -c conda-forge dask-bigqueryįor reading from BiqQuery, you need the following roles to be enabled on the account: Installationĭask-bigquery can be installed with pip: pip install dask-bigquery Please refer to the data extraction pricing table for associated costs while using Dask-BigQuery. This package uses the BigQuery Storage API. Read/write data from/to Google BigQuery with Dask.
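The explicit-credentials write shown earlier needs a `credentials` object built from the service account key. The following is a minimal sketch, assuming the `google-auth` package is installed alongside dask-bigquery. The helper names `to_gbq_kwargs` and `write_with_service_account` are hypothetical and not part of dask-bigquery, and `Credentials.from_service_account_info` is one way to build the object, not necessarily the one the package authors intend.

```python
def to_gbq_kwargs(project_id, dataset_id, table_id, bucket=None, credentials=None):
    """Collect keyword arguments for to_gbq, leaving out options that are unset."""
    kwargs = {
        "project_id": project_id,
        "dataset_id": dataset_id,
        "table_id": table_id,
    }
    if bucket is not None:
        # Bucket that receives the intermediary Parquet files.
        kwargs["bucket"] = bucket
    if credentials is not None:
        kwargs["credentials"] = credentials
    return kwargs


def write_with_service_account(ddf, creds_dict, **options):
    """Write ddf to BigQuery using credentials built from a service account key dict."""
    # Imported lazily so this module loads even where the cloud libraries are absent.
    from google.oauth2.service_account import Credentials
    import dask_bigquery

    credentials = Credentials.from_service_account_info(creds_dict)
    return dask_bigquery.to_gbq(ddf, **to_gbq_kwargs(credentials=credentials, **options))
```

A call might look like `write_with_service_account(ddf, creds_dict, project_id="my_project_id", dataset_id="my_dataset_id", table_id="my_table_name", bucket="my-gs-bucket")`, where `creds_dict` holds the parsed service account key.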
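Since dask-bigquery assumes you are already authenticated, it can help to check which credential source the environment points to before starting a job. The sketch below uses only the standard library. The function name `credentials_source` and its return labels are hypothetical, and it inspects only the `GOOGLE_APPLICATION_CREDENTIALS` variable rather than validating the credentials themselves.

```python
import os
from pathlib import Path


def credentials_source(env=None):
    """Report which credential source the environment is configured for."""
    env = os.environ if env is None else env
    key_path = env.get("GOOGLE_APPLICATION_CREDENTIALS")
    if not key_path:
        # No key file configured: Application Default Credentials fall back to
        # other sources, such as `gcloud auth application-default login`.
        return "default-lookup"
    if not Path(key_path).is_file():
        raise FileNotFoundError(
            f"GOOGLE_APPLICATION_CREDENTIALS points to a missing file: {key_path}"
        )
    return "service-account-key"
```

Calling `credentials_source()` on the client and on each worker is one way to catch a missing key file early, before any read or write is attempted.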
